[root@localhost ~]# ntpdate www.gsm-guard.net

[root@localhost ~]# vim /etc/hosts

192.168.208.5 hd1

192.168.208.10 hd2

192.168.208.20 hd3

192.168.208.30 hd4

192.168.208.40 hd5

192.168.208.50 hd6
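
The same entries can also be added without opening an editor, which helps when the step has to be repeated on all six hosts. The following is only a sketch: `add_host` and `HOSTS_FILE` are names made up for this example, and the script writes to a scratch file unless `HOSTS_FILE=/etc/hosts` is set explicitly.

```shell
#!/usr/bin/env bash
# Append cluster host entries to a hosts file, skipping names already present.
# HOSTS_FILE defaults to a scratch file so the sketch is safe to try;
# set HOSTS_FILE=/etc/hosts (as root) to apply it for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host() {
    local ip="$1" name="$2"
    # -w matches the name as a whole word, so hd1 does not match hd10.
    if ! grep -qw -- "$name" "$HOSTS_FILE" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

add_host 192.168.208.5  hd1
add_host 192.168.208.10 hd2
add_host 192.168.208.20 hd3
add_host 192.168.208.30 hd4
add_host 192.168.208.40 hd5
add_host 192.168.208.50 hd6

cat "$HOSTS_FILE"
```

Running the script a second time leaves the file unchanged, because each name is checked before it is appended.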

[root@localhost ~]# systemctl stop firewalld

[root@localhost ~]# systemctl disable firewalld

[root@localhost ~]# sed -ri 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

[root@localhost ~]# firefox http://www.gsm-guard.net

[root@localhost ~]# tar xf jdk-8u191-linux-x64.tar.gz -C /usr/local

[root@localhost ~]# mv /usr/local/jdk1.8.0_191 /usr/local/jdk

[root@localhost ~]# ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ''

[root@localhost ~]# cd /root/.ssh

[root@localhost ~]# cp id_rsa.pub authorized_keys

[root@localhost ~]# for i in hd2 hd3 hd4 hd5 hd6; do scp -r /root/.ssh $i:/root; done

[root@localhost ~]# wget https://www.gsm-guard.net/dist/zookeeper/zookeeper-3.4.13/zookeeper-3.4.13.tar.gz

Deploy ZooKeeper on the last three hosts (hd4, hd5, hd6).

[root@localhost ~]# tar xf zookeeper-3.4.13.tar.gz -C /usr/local

[root@localhost ~]# mv /usr/local/zookeeper-3.4.13 /usr/local/zookeeper

[root@localhost ~]# mv /usr/local/zookeeper/conf/zoo_sample.cfg /usr/local/zookeeper/conf/zoo.cfg

[root@localhost ~]# vim /usr/local/zookeeper/conf/zoo.cfg

dataDir=/opt/data

[root@localhost ~]# mkdir /opt/data

[root@localhost ~]# echo 1 > /opt/data/myid

[root@localhost ~]# vim /etc/profile.d/www.gsm-guard.net

export JAVA_HOME=/usr/local/jdk

export ZOOKEEPER_HOME=/usr/local/zookeeper

export PATH=${JAVA_HOME}/bin:${ZOOKEEPER_HOME}/bin:$PATH

[root@localhost ~]# source /etc/profile

[root@localhost ~]# zkServer.sh start

[root@localhost ~]# zkServer.sh status

[root@localhost ~]# zkServer.sh stop

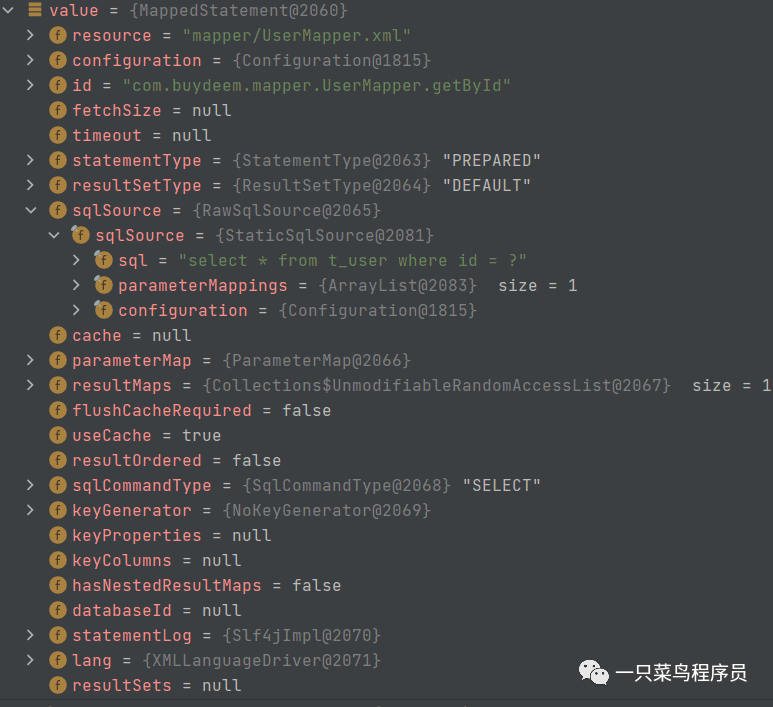

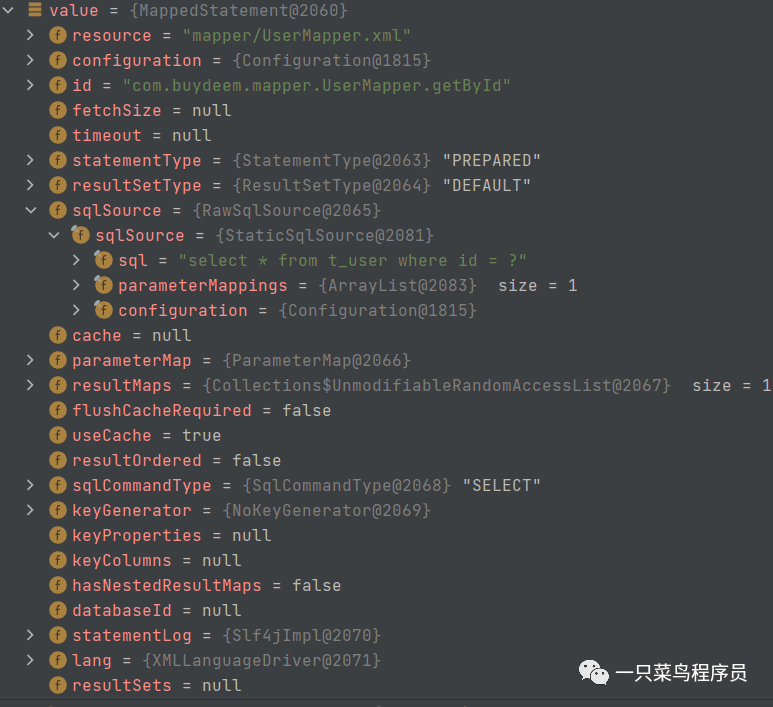

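As the screenshot shows, dataDir is the directory that actually holds the myid file. The difference from what I expected may come down to the ZooKeeper version.

The test succeeded. At first I was not sure whether each node needs a different myid value or whether 1 on every node would work. Each node needs its own value, matching its server.N entry in zoo.cfg; the values used here are: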

10.0.0.17 hd4 follower, myid 1

10.0.0.18 hd5 follower, myid 2

10.0.0.19 hd6 leader, myid 3
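
ZooKeeper reads the id from the myid file under dataDir and matches it against the server.N lines in zoo.cfg, so the two have to agree on every node. The server.N lines are not shown in this post, so the ones below are an assumption based on the host-to-id table above, using the conventional ports 2888 and 3888. `zk_id`, `NODE`, `ZK_CONF` and `ZK_DATA` are names made up for this sketch, and everything is written to a scratch directory by default.

```shell
#!/usr/bin/env bash
# Keep zoo.cfg and myid consistent for a three-node ensemble.
# ZK_CONF and ZK_DATA default to a scratch directory; on a real node they
# would be /usr/local/zookeeper/conf/zoo.cfg and /opt/data.
SCRATCH="$(mktemp -d)"
ZK_CONF="${ZK_CONF:-$SCRATCH/zoo.cfg}"
ZK_DATA="${ZK_DATA:-$SCRATCH/data}"
NODE="${NODE:-hd4}"   # hypothetical: the host this script runs on

# Print the id for a host name, or fail for an unknown host.
zk_id() {
    case "$1" in
        hd4) echo 1 ;;
        hd5) echo 2 ;;
        hd6) echo 3 ;;
        *)   return 1 ;;
    esac
}

# Ensemble membership (assumed, not taken from the original zoo.cfg):
# 2888 is the quorum port, 3888 the leader-election port.
cat >> "$ZK_CONF" <<'EOF'
server.1=hd4:2888:3888
server.2=hd5:2888:3888
server.3=hd6:2888:3888
EOF

# The myid file holds nothing but this node's id.
mkdir -p "$ZK_DATA"
zk_id "$NODE" > "$ZK_DATA/myid"
cat "$ZK_DATA/myid"
```

On hd5 and hd6 the same script would be run with `NODE=hd5` and `NODE=hd6`, so each node ends up with a different id.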

[root@localhost ~]# wget http://www.gsm-guard.net/apache/hadoop/common/hadoop-2.8.5/hadoop-2.8.5.tar.gz

[root@localhost ~]# tar xf hadoop-2.8.5.tar.gz -C /opt

[root@localhost ~]# vim hadoop-env.sh

export JAVA_HOME=/usr/local/jdk

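/opt/data/tmp does not need to exist beforehand; it is created automatically.

[root@localhost ~]# vim core-site.xml

fs.defaultFS = hdfs://ns1

hadoop.tmp.dir = /opt/data/tmp

ha.zookeeper.quorum = hd4:2181,hd5:2181,hd6:2181

The environments are essentially identical, so nothing needs to be changed; the file can be copied over as is.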
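
The XML markup of core-site.xml did not survive in this post, so here is a sketch of what the file would look like with the three properties named above. `CONF_DIR` is a name made up for the example and defaults to a scratch directory; on the cluster it would be the Hadoop configuration directory.

```shell
#!/usr/bin/env bash
# Write core-site.xml with the three properties from this step.
# CONF_DIR defaults to a scratch directory so nothing real is overwritten.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Clients address the logical nameservice, not a single NameNode -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://ns1</value>
  </property>
  <!-- Base directory for Hadoop's working data -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/data/tmp</value>
  </property>
  <!-- ZooKeeper ensemble used for automatic failover -->
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>hd4:2181,hd5:2181,hd6:2181</value>
  </property>
</configuration>
EOF

cat "$CONF_DIR/core-site.xml"
```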

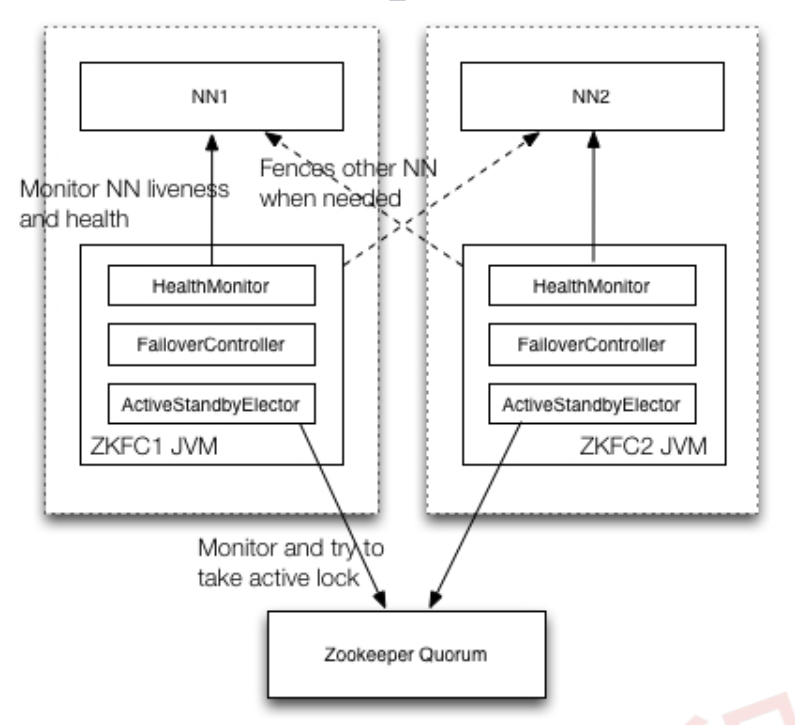

The nameservice is ns1, and it contains two NameNodes, nn1 and nn2. The file sets the addresses of nn1 and nn2, the location of the shared metadata (edit log), and related options.

[root@localhost ~]# vim hdfs-site.xml

dfs.nameservices = ns1

dfs.ha.namenodes.ns1 = nn1,nn2

dfs.namenode.rpc-address.ns1.nn1 = hd1:9000

dfs.namenode.http-address.ns1.nn1 = hd1:50070

dfs.namenode.rpc-address.ns1.nn2 = hd2:9000

dfs.namenode.http-address.ns1.nn2 = hd2:50070

dfs.namenode.shared.edits.dir = qjournal://hd4:8485;hd5:8485;hd6:8485/ns1

dfs.journalnode.edits.dir = /opt/data/journal

dfs.ha.automatic-failover.enabled = true

dfs.client.failover.proxy.provider.ns1 = org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider

dfs.ha.fencing.methods = sshfence

dfs.ha.fencing.ssh.private-key-files = /root/.ssh/id_rsa
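
With this many properties, writing the XML by hand is error-prone. The sketch below generates hdfs-site.xml from name and value pairs instead. `prop` and `CONF_DIR` are helper names invented for this example, and the helper does no XML escaping, which is enough only because none of the values here contain `<`, `>` or `&`.

```shell
#!/usr/bin/env bash
# Generate hdfs-site.xml for the HA setup described in this step.
# CONF_DIR defaults to a scratch directory so nothing real is overwritten.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Emit one <property> element (no XML escaping is performed).
prop() {
    printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

{
    echo '<?xml version="1.0" encoding="UTF-8"?>'
    echo '<configuration>'
    prop dfs.nameservices ns1
    prop dfs.ha.namenodes.ns1 nn1,nn2
    prop dfs.namenode.rpc-address.ns1.nn1 hd1:9000
    prop dfs.namenode.http-address.ns1.nn1 hd1:50070
    prop dfs.namenode.rpc-address.ns1.nn2 hd2:9000
    prop dfs.namenode.http-address.ns1.nn2 hd2:50070
    # Quoted because the value contains semicolons.
    prop dfs.namenode.shared.edits.dir 'qjournal://hd4:8485;hd5:8485;hd6:8485/ns1'
    prop dfs.journalnode.edits.dir /opt/data/journal
    prop dfs.ha.automatic-failover.enabled true
    prop dfs.client.failover.proxy.provider.ns1 \
        org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider
    prop dfs.ha.fencing.methods sshfence
    prop dfs.ha.fencing.ssh.private-key-files /root/.ssh/id_rsa
    echo '</configuration>'
} > "$CONF_DIR/hdfs-site.xml"

cat "$CONF_DIR/hdfs-site.xml"
```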

[root@localhost ~]# vim slaves

hd4

hd5

hd6

[root@localhost ~]# cp /opt/hadoop285/etc/hadoop/mapred-site.xml.template /opt/hadoop285/etc/hadoop/mapred-site.xml

[root@localhost ~]# vim mapred-site.xml

mapreduce.framework.name = yarn

[root@localhost ~]# vim yarn-site.xml

yarn.resourcemanager.hostname = hd3

yarn.nodemanager.aux-services = mapreduce_shuffle

[root@localhost ~]# scp -r hadoop hdX:/opt

[root@localhost ~]# scp /etc/profile.d/www.gsm-guard.net hdX:/etc/profile.d/

[root@localhost ~]# source /etc/profile

[root@localhost ~]# zkServer.sh start

[root@localhost ~]# hadoop-daemons.sh start journalnode

[root@localhost ~]# jps

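The first check above does not show a successful JournalNode start; the process listed there belongs to ZooKeeper. The second one is the JournalNode.

[root@localhost ~]# hdfs namenode -format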

Formatting failed:

[root@mcw04 ~]# hdfs namenode -format

23/03/11 10:13:01 INFO ipc.Client: Retrying connect to server: hd5/10.0.0.18:8485. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1000 MILLISECONDS)

23/03/11 10:13:01 INFO ipc.Client: Retrying connect to server: hd6/10.0.0.19:8485. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1000 MILLISECONDS)

23/03/11 10:13:01 INFO ipc.Client: Retrying connect to server: hd4/10.0.0.17:8485. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1000 MILLISECONDS)

23/03/11 10:13:02 INFO ipc.Client: Retrying connect to server: hd6/10.0.0.19:8485. Already tried 1 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1000 MILLISECONDS)

23/03/11 10:13:10 WARN ipc.Client: Failed to connect to server: hd4/10.0.0.17:8485: retries get failed due to exceeded maximum allowed retries number: 10

java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

Checking the three nodes shows that the port is not open:

[root@mcw08 ~]# ss -lntup|grep 8485

[root@mcw08 ~]#

The configuration shows which service uses this port. In hdfs-site.xml:

dfs.namenode.shared.edits.dir = qjournal://hd4:8485;hd5:8485;hd6:8485/ns1

Stopping that service reveals that it was never started:

[root@mcw04 ~]# hadoop-daemons.sh stop journalnode

hd6: no journalnode to stop

hd4: no journalnode to stop

hd5: no journalnode to stop

[root@mcw04 ~]#

Start the service from hd1:

[root@mcw04 ~]# hadoop-daemons.sh start journalnode

hd6: starting journalnode, logging to /opt/hadoop/logs/hadoop-root-journalnode-mcw09.out

hd5: starting journalnode, logging to /opt/hadoop/logs/hadoop-root-journalnode-mcw08.out

hd4: starting journalnode, logging to /opt/hadoop/logs/hadoop-root-journalnode-mcw07.out

[root@mcw04 ~]#

[root@mcw04 ~]# hadoop-daemons.sh start journalnode

hd4: journalnode running as process 17035. Stop it first.

hd6: journalnode running as process 16741. Stop it first.

hd5: journalnode running as process 17113. Stop it first.

[root@mcw04 ~]#

On the JournalNode hosts hd4 to hd6, jps confirms that the service is now running:

[root@mcw07 ~]# jps

16627 QuorumPeerMain

17140 Jps

17035 JournalNode

[root@mcw07 ~]#

Format the NameNode on hd1:

[root@mcw04 ~]# hdfs namenode -format

Copy the directory produced by formatting on hd1 to hd2. This saves formatting hd2, and both NameNodes then hold the same metadata.

[root@mcw04 ~]# ls /opt/data/

tmp

[root@mcw04 ~]# ls /opt/data/tmp/

dfs

[root@mcw04 ~]# ls /opt/data/tmp/dfs/

name

[root@mcw04 ~]# ls /opt/data/tmp/dfs/name/

current

[root@mcw04 ~]# ls /opt/data/tmp/dfs/name/current/

fsimage_0000000000000000000 fsimage_0000000000000000000.md5 seen_txid VERSION

[root@mcw04 ~]#

[root@mcw04 ~]# scp -rp /opt/data hd2:/opt/

VERSION 100% 212 122.4KB/s 00:00

seen_txid 100% 2 3.1KB/s 00:00

fsimage_0000000000000000000.md5 100% 62 100.4KB/s 00:00

fsimage_0000000000000000000 100% 321 728.1KB/s 00:00

[root@mcw04 ~]#
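
The format step failed only because nothing was listening on port 8485 yet, so it can help to check the JournalNode ports before running `hdfs namenode -format`. The sketch below assumes bash (for its `/dev/tcp` redirection) and the coreutils `timeout` command; `port_open` and `JN_HOSTS` are names invented for this example.

```shell
#!/usr/bin/env bash
# Check that every JournalNode accepts TCP connections on port 8485.
JN_HOSTS="${JN_HOSTS:-hd4 hd5 hd6}"

# Return 0 if a TCP connection to host:port succeeds within 2 seconds.
port_open() {
    timeout 2 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

for h in $JN_HOSTS; do
    if port_open "$h" 8485; then
        echo "$h: port 8485 is open"
    else
        echo "$h: port 8485 is closed, start the JournalNode first"
    fi
done
```

Once all three hosts report an open port, the format command should be able to reach the quorum.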

[root@localhost ~]# hdfs zkfc -formatZK

[root@localhost ~]# start-dfs.sh

[root@localhost ~]# start-yarn.sh

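NameNode1: http://hd1:50070 (NameNode status)

NameNode2: http://hd2:50070 (NameNode status)

ResourceManager: http://hd3:8088 (YARN status)

The third page could not be reached. YARN had been started from hd1, but the ResourceManager did not start.

Because starting YARN from hd1 did not bring up the ResourceManager, stop YARN on hd1 first and then start it on hd3 (host 6). After that, both the ResourceManager and the NodeManagers start successfully. Host 6 was planned as the ResourceManager from the beginning, which is what yarn.resourcemanager.hostname = hd3 in yarn-site.xml expresses.

Reloading the page now succeeds.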
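
In Hadoop 2.x, start-yarn.sh launches the ResourceManager on the host where the script is invoked, so it has to run on the host named in yarn.resourcemanager.hostname. The sketch below reads that property and reports where to run the script. `get_prop` and `where_to_start_yarn` are names invented here, and the parsing assumes `<name>` and `<value>` sit on consecutive lines.

```shell
#!/usr/bin/env bash
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
# Usage: get_prop FILE PROPERTY_NAME
get_prop() {
    grep -F -A1 "<name>$2</name>" "$1" \
        | sed -n 's:.*<value>\(.*\)</value>.*:\1:p'
}

# Report where start-yarn.sh should run, given a config file and a host name.
where_to_start_yarn() {
    local rm_host
    rm_host="$(get_prop "$1" yarn.resourcemanager.hostname)"
    if [ "$rm_host" = "$2" ]; then
        echo "run start-yarn.sh on this host ($2)"
    else
        echo "run start-yarn.sh on $rm_host, not on $2"
    fi
}

# Demo against a scratch file holding the setting used in this post.
YARN_SITE="$(mktemp)"
cat > "$YARN_SITE" <<'EOF'
<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>hd3</value>
  </property>
</configuration>
EOF

where_to_start_yarn "$YARN_SITE" hd1
where_to_start_yarn "$YARN_SITE" hd3
```

On a real node the second argument could be `"$(hostname)"` and the first the actual yarn-site.xml path.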

=====

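Clicking links in the web UI from my laptop failed because the laptop could not resolve the cluster hostnames. I had to add the host entries to the laptop's own hosts file before the pages would load.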

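Creating a directory

Creating the directory from the web UI fails with a permission error. Create it from the command line instead.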
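
The likely cause is that the Hadoop 2.x web UI acts as the static user dr.who by default, which has no write permission on the HDFS root, whereas the command line runs as the logged-in user. A sketch of the command-line route follows. The path `/test` is only a placeholder, and the `run` wrapper is invented for this example so the commands are printed rather than executed unless `DRY_RUN=0` is set on a cluster node.

```shell
#!/usr/bin/env bash
# Run a command, or only print it when DRY_RUN=1 (the default here,
# because the hdfs client exists only on cluster nodes).
run() {
    if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Create a directory in HDFS (-p also creates missing parents), then list /.
run hdfs dfs -mkdir -p /test
run hdfs dfs -ls /
```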